Rethinking Measurement: A Call for Meaningful Dialogue in Analytics

Over the weekend a friend and former colleague of mine tagged me in a LinkedIn post titled "5 bullshit metrics you need to stop using to measure content marketing success." In it, the author details 5 digital metrics he thinks content marketing folks need to chuck out the window. Now, being the inquisitive analytics nerd that I am, I couldn’t resist a good rant calling bullshit on some bad metrics. So I clicked the link, spent the next 10 minutes reading the post, and then spent the better part of the next few days penning this response.

Needless to say, I have some bones to pick.

Before you continue reading, I recommend you go and read the original post (linked above).

The article was penned by Daniel Hochuli of LinkedIn, and before I go any further I want to be clear about my intentions here. I don’t want this post to come off as a dig at Daniel or his views. I actually think he raises some good points in the article and that he generally had the best of intentions. Daniel also happens to create some awesome content on his LinkedIn feed, which you should check out.

This article, however, gave me pause. Primarily because it plays fast and loose with some key concepts and definitions in the measurement space, and because it doesn’t quite go deep enough into the issues it encourages the reader to act on (i.e. metrics you should stop using altogether).

As someone who has spent years working in the analytics field, I feel strongly that it’s time for us to level up. Effective measurement is no longer just a job for the data experts. We need to start building a strong foundation in measurement fundamentals across the marketing domain, whether you're an account exec, brand manager, technologist, creative or CMO! And this starts with having more substantive and meaningful conversations about how to measure effectively.

Hopefully, by now you’ve read Daniel’s article. But just to recap, these are the 5 metrics he called out:

Impressions

ROI

Bounce Rate

Benchmarks

The Funnel

Let’s go through these one by one. But just FYI, this is going to be a long-ish read, so grab a fresh cup of coffee and strap in.

1 – Impressions

When defining impressions, Daniel said the following:

“[Impressions are a] metric you use to report the number of people who saw your content and didn't engage with it! That's what an impression is. In other words, it's the number of opportunities your content missed.”

I have a few things to address here. First, he's using a fairly broad definition of impressions that seems to be platform agnostic. But impressions isn't a universal metric that exists on every channel, and on the channels where it is available it can be quantified differently. So we need to be careful about using the label impressions as a catch-all for exposure.

When we talk about metrics, we should always specify the channels or platforms that we're focused on. And if we're talking about metrics more broadly, such as exposure or awareness based metrics, then we should be clear about this.

For example, impressions is a metric available on social networks like Facebook and Twitter (and is obviously hugely common in the digital display and SEM world). But you can't find an impressions metric when looking at your website or blog content analytics, as tools like Google Analytics and Adobe Analytics don't support it. Instead, web analytics tools tend to focus on metrics like hits, visits, visitors, and pageviews (if we're just talking about exposure). Sure, pageviews might be broadly comparable to impressions, but they're not the same. And I'm not sure if Daniel was referring to impressions more broadly as a catch-all for exposure/awareness based metrics (which would include channels like .COM), or if he was just calling out impressions on specific platforms, like Facebook. Furthermore, if he was focused on channels that support impressions, like Facebook, what about other exposure/awareness based metrics, like Reach? Should we stop using these as well?

Note. Confusingly, Facebook still refers to Post level Reach on the Facebook Insights UI. But if you export data, post reach seems to be called Impressions Unique, or specifically post_impressions_unique:lifetime. Not sure when this started, but since they still use Reach on the UI I think it's still a valid label.

Finally, the definition of impressions above isn't quite right, particularly where it stated that the metric can be used "to report the number of people who saw your content and didn't engage". That's not technically true. Whether we're talking display advertising or Facebook posts, impressions basically act as a counter for every time your content was served (i.e. loaded within the page). But the metric itself makes no distinction between impressions that led to an action (e.g. a click, a like, a retweet) and impressions that didn't.

To quantify something like, as Daniel puts it, the "number of opportunities your content missed" you'd need to distinguish between engaged impressions and non-engaged impressions, which can be a bit tricky. One way to do this might be to subtract total clicks from total impressions, but this isn't perfect because neither count is unique, so people who saw and/or engaged with your content more than once get counted multiple times. A slightly better approach would be to employ unique metrics, like subtracting People Engaged (aka post_engaged_users:lifetime) from total post Reach on a platform like Facebook. This would give you a more accurate reflection of 'missed opportunities', if you cared about that sort of thing.

All the same, is it even worthwhile to think about exposure in terms of missed opportunities? We usually tend to look at this in the inverse, like an engagement rate (which also isn't supported natively on many social platforms). Regardless, whether you're looking at people engaged or people not engaged, it's important to be clear that impressions simply give you a base of exposure to work with, nothing more. Whether you need to tap into that base for measurement is entirely dependent on your needs.
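To make the arithmetic concrete, here's a minimal sketch of both views (missed opportunities and its inverse, engagement rate) using unique counts. The post figures are invented for illustration, the dictionary keys simply mirror the export labels mentioned above, and the function names are my own, not anything Facebook provides.

```python
def missed_opportunities(reach: int, engaged_users: int) -> int:
    """Unique people who saw the post but did not engage with it."""
    if engaged_users > reach:
        raise ValueError("engaged users cannot exceed reach")
    return reach - engaged_users


def engagement_rate(reach: int, engaged_users: int) -> float:
    """Share of unique people reached who engaged (the inverse view)."""
    return engaged_users / reach if reach else 0.0


# Hypothetical export row for a single post (made-up numbers).
post = {
    "post_impressions_unique": 12_000,  # post Reach
    "post_engaged_users": 480,          # People Engaged
}

reach = post["post_impressions_unique"]
engaged = post["post_engaged_users"]

print(missed_opportunities(reach, engaged))      # people reached, not engaged
print(f"{engagement_rate(reach, engaged):.1%}")  # engaged share of reach
```

Both numbers come from the same two inputs, which is really the point: reach is just the base, and what you divide or subtract from it depends on the question you're asking.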

So what should you do?

When it comes to choosing the right metrics it helps to have a framework. The one I follow buckets marketing metrics into 5 different categories: awareness (i.e. exposure), engagement, perception, experience, and conversion.

I wrote about these metric categories on my blog a few years back so if you want the juicy details go give it a read.

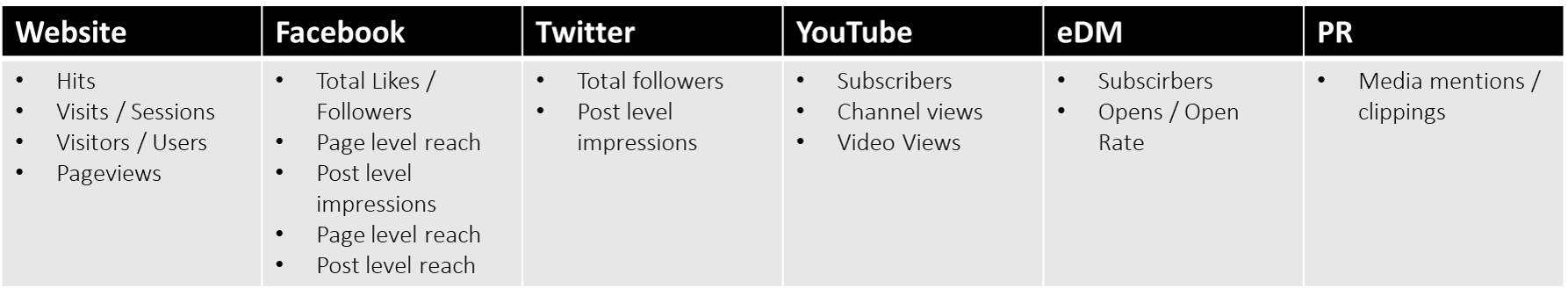

For the moment I'm just going to focus on awareness metrics because I think that’s what Daniel was referring to more broadly, as opposed to impressions as a specific metric. For reference here are some examples of common awareness metrics across a few different channels you might use to distribute content (note. this is far from a comprehensive list):

Awareness metrics certainly have value, but only when used in the right way. In fact, I probably see awareness metrics being misused more often than metrics in other categories. This is because awareness is usually the first place one looks for quick and easy validation that makes you look good (i.e. vanity). After all, saying you drove 1 million impressions sounds pretty nice, right? Well, it doesn't sound so great if those million impressions only drove 10 clicks.

To overcome this I typically train my clients on something I like to call anchoring. That is, you anchor one metric (e.g. impressions) to another (e.g. clicks, CTR) with the goal of achieving a more holistic understanding of your performance. And this is exactly where awareness metrics, like impressions, can offer value or insight.

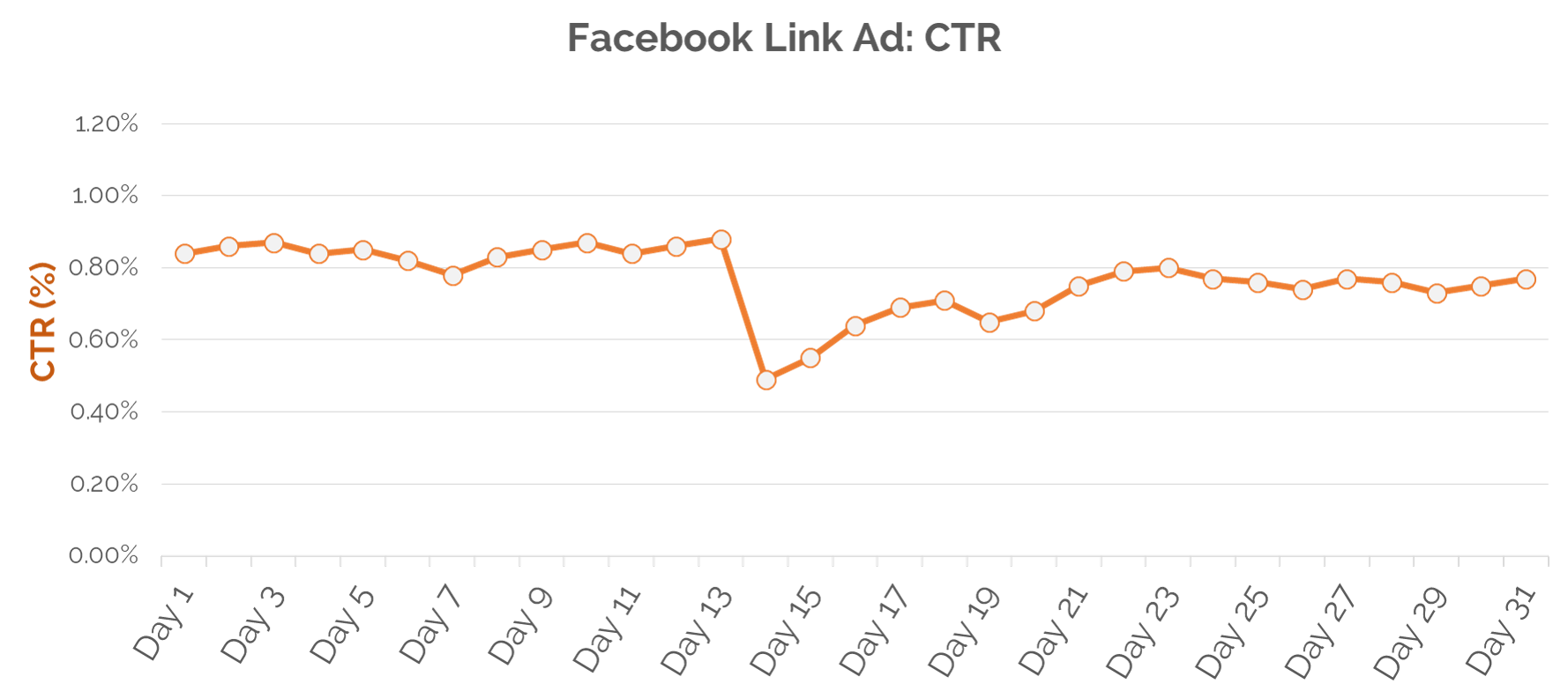

Let's put this into a working example. Below is a chart that shows the clickthrough rate on a Facebook Link Ad over the course of 31 days. This ad contained a link that directed users to a blog, so clickthrough on the ad was the primary goal.

One look at this chart and it seems to tell a grim story. CTR was steady around 0.8% until day 13, then it tanked to almost 0.4%. It rallies after the drop but never quite recovers to the level it performed at during the first 13 days. Things aren't looking good here.

But let's anchor this to another metric, impressions!

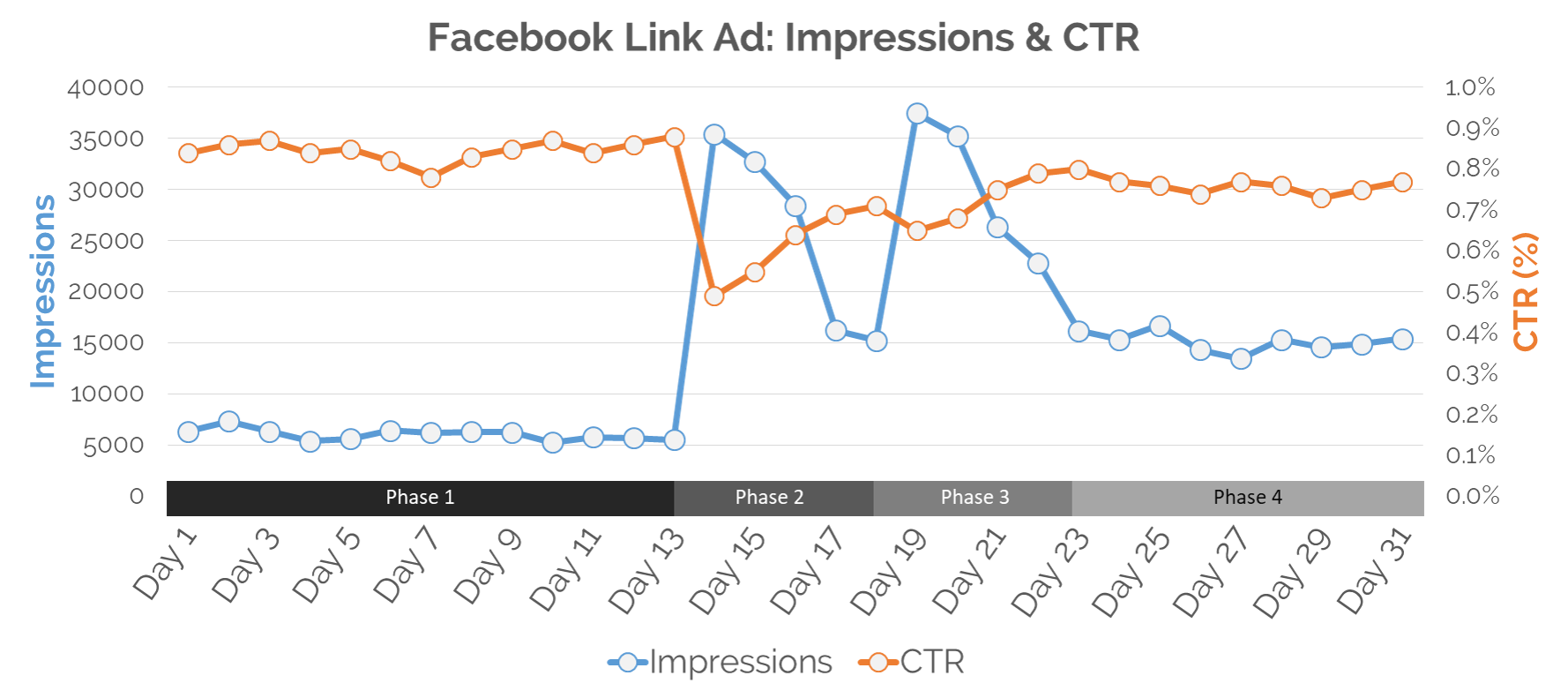

Now this chart tells us a lot more. What happened here? The ad ran with a max daily budget for the first 13 days (phase 1) utilizing CPC as the buying model. On day 14 (phase 2) the brand team decided to optimize the ad creative and they increased their spend limit. They did this a second time on day 19 (phase 3), and then from day 23 onward (phase 4) they lowered their daily budget again, but with a slightly higher daily spend cap than phase 1.

What's interesting here is that you can see the effect that the spend increases and ad optimizations had on CTR. The first ad optimization on day 14 (phase 2) resulted in a surge in exposure due to the increased budget, but this came with a considerable drop in CTR, which was due to poor targeting. For the 2nd wave of optimization on the 19th (phase 3) you can see that CTR dropped again compared to phase 1, but actually performed marginally better than the first spending spike on the 14th (phase 2). And finally, for the remaining days from the 23rd onward (phase 4), CTR hovers around 0.77%, which is around 10% lower than phase 1, but they were driving around 150% more impressions during this time. So the dip in CTR in phase 4 compared to phase 1 is actually a pretty good trade-off.

The story that this chart tells is one of progress. The brand team made some changes to their ad execution, it didn't work, so they tried something else, and kept trying until they saw results. If you were to apply a linear trendline to the CTR curve in that chart, it would trend downward. But the truth of it is, relative to what was happening with their spend and exposure, they improved over the course of the month.

Hence, anchoring!
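If you want to try this on your own data, here's a rough sketch of the idea: report CTR for each phase next to the change in impressions and clicks relative to a baseline phase. The daily averages below are invented to loosely echo the story above (they are not the real campaign data), and the `Phase` class and `anchor` function are just illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Phase:
    name: str
    impressions: int  # average daily impressions (hypothetical)
    clicks: int       # average daily clicks (hypothetical)

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions


def pct_change(value: float, base: float) -> float:
    """Percentage change of value relative to base, rounded to 1 decimal."""
    return round((value / base - 1) * 100, 1)


def anchor(phases: list[Phase]) -> list[dict]:
    """Anchor each phase's CTR to its exposure, relative to the first phase."""
    base = phases[0]
    return [
        {
            "phase": p.name,
            "ctr_pct": round(p.ctr * 100, 2),
            "ctr_vs_base_pct": pct_change(p.ctr, base.ctr),
            "impressions_vs_base_pct": pct_change(p.impressions, base.impressions),
            "clicks_vs_base_pct": pct_change(p.clicks, base.clicks),
        }
        for p in phases
    ]


# Made-up daily averages per phase.
phases = [
    Phase("phase 1", 10_000, 85),
    Phase("phase 2", 60_000, 245),
    Phase("phase 3", 50_000, 250),
    Phase("phase 4", 25_000, 193),
]

for row in anchor(phases):
    print(row)
```

With these invented numbers, phase 4 shows a lower CTR than phase 1 alongside far more impressions and clicks, which is the kind of trade-off a CTR chart on its own would hide.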

So to recap, don’t write off awareness metrics entirely, including impressions. But when you do use awareness metrics, don't look at them in isolation and try anchoring them to something else, like engagement metrics (e.g. CTR, engagement rate, etc) or conversion metrics (e.g. transactions, conversion rate, etc).

2 – Bounce rate

Here’s a snippet of what Daniel said about bounce rate:

“This is how bounce rate works. A person arrives on a page, they are on that page for an indefinite amount of time (could be one second, could be ten minutes) and then they leave the page without performing another action. 100% bounce. This is how we read articles online."

So this isn’t a great definition of bounce rate. The basic idea is there, but it tends to oversimplify what bounce rate actually is. Look, I know this isn't the most thrilling topic, but it's always a good idea to dig into some of the metrics you use often to better understand what they quantify and how. And for a really thorough and incredibly insightful read on bounce rate I highly recommend this post by Yehoshua Coren over at Analytics Ninja.

But to be honest I was a bit divided on this one. After all, bounce rate is definitely a metric that marketers who don’t understand measurement cling to. It’s generally easy to understand and to apply, in theory. I’ve also experienced much frustration from clients wanting to only focus on bounce rate when other metrics were more appropriate. And I think that's exactly the sentiment Daniel was going for, which we would agree on. But to say, never use bounce rate as a success measure for content marketing is a bit of a stretch for me.

I agree that it’s acceptable to have a high bounce rate or to even ignore the metric completely for content-heavy sites (i.e. media, blogs, etc). But whether you use it or not really depends on your channel strategy and objectives.

Let's take Etsy’s blog, for example.

They publish content on a diverse range of topics, from featuring their sellers to lifestyle trends to how-to/educational content. But each one of their blog posts links to a product or seller on their core site. Thus, the goal of their content is twofold – 1) to build a relationship with their audience and customers, and 2) to drive sales. I would imagine that Etsy probably do look at bounce rate, and that given how connected their blog content is to their product catalogue, they probably want to see lower bounce and/or exit rates.

Kiss Metrics is another great example. They sell analytics software and run one of the most popular analytics blogs in the industry. They have a big investment in content marketing, from editorial to creative production. And I think it's fair to assume that the reason they invest so much in content is because they hope to convert some of those readers to buyers. Of course, it's not always about sales. The KM blog builds its brand as it helps establish authority and credibility - and that is something you can't put a dollar value on. But their content does serve as a direct avenue for driving leads. They usually include a call-to-action at the end of each post that leads to a product landing page.

Like Etsy I wouldn’t be surprised if the Kiss Metrics team use bounce rate to better understand how much of their blog content is driving traffic to other destinations on their site, like products and services pages.

So what should you do?

Like impressions or awareness metrics in general, don’t completely toss bounce rate out the window. You should take the time to understand how bounce rate works (or make sure someone in your organization does), and then decide whether it's the right metric for you given your channel strategy and objectives.

Fun side note: I find that many people who are using Google Analytics and who have custom events firing on their website aren't aware that events can meddle with how bounce rate is calculated. I’ve met numerous clients who didn’t realize that the bounce rate figure they've been looking at for years was flat-out wrong. So before you decide whether bounce rate is right for you, make sure it’s being quantified correctly! Here's a really helpful post on this issue by Luna Metrics: Non-Interaction Events in Google Analytics.
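To show why this matters, here's a simplified model of the calculation as I understand it for classic (Universal) Google Analytics: a session counts as a bounce when it contains only one interaction hit, and an event counts as an interaction hit unless it's flagged as non-interaction. The hit dictionaries and function names below are my own illustration, not the GA data model or API.

```python
def is_bounce(hits: list[dict]) -> bool:
    """A session bounces if it has at most one interaction hit."""
    interaction_hits = [h for h in hits if not h.get("non_interaction", False)]
    return len(interaction_hits) <= 1


def bounce_rate(sessions: list[list[dict]]) -> float:
    """Share of sessions that bounced."""
    if not sessions:
        return 0.0
    return sum(is_bounce(s) for s in sessions) / len(sessions)


def build_sessions(non_interaction: bool) -> list[list[dict]]:
    """Four single-page visits; three also fire an automatic scroll event."""
    pageview = {"type": "pageview"}
    scroll = {"type": "event", "action": "scroll_50",
              "non_interaction": non_interaction}
    return [[pageview, scroll] for _ in range(3)] + [[pageview]]


# Same visitor behaviour, two different tracking configurations.
print(bounce_rate(build_sessions(non_interaction=False)))  # event counts as interaction
print(bounce_rate(build_sessions(non_interaction=True)))   # event flagged non-interaction
```

Every visitor here viewed exactly one page, yet the reported bounce rate swings dramatically depending on one flag. Which setting is "right" depends on whether you consider that event a genuine interaction, but you should at least know which one you have.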

3 – Return on investment

Here’s a snippet of what Daniel said about ROI:

“The content consumer and the customer are rarely the same person. They are two very different audiences with different behaviours. One has informational intent, the other has transactional intent. You need to align your goals and expectations around these behaviours. While sales do occur from content, the reality is, it is not a common action."

Ok, I have two issues here, one around the general framing of content consumer vs customer behaviour, and another about the underlying message that we should ditch ROI completely.

On framing, that first sentence about consumers and customers rarely being the same is a bit of a handful. I agree, not all content consumers are going to be customers, and likewise, not all customers are going to be content consumers. But there is certainly overlap, and depending on your channel mix and content strategy the overlap may not be small, or “rare.”

I think it’s fair to say that if you’re investing in content marketing you probably want a good chunk of your audience to be current or potential customers. Not 100% of them, but most. I mean, if you told me that you ran a blog for your brand with no intention of driving any form of purchase intent, I'd say you’re nuts. This isn't to say that each piece of content you publish has to drive purchase intent or generate some kind of monetary value. You can and should create content that's just about building brand affinity. But that doesn't mean you can't design your content to speak to both your audiences' "information" needs and "transactional" needs at the same time.

Now, onto the point about ditching ROI altogether. Listen, I know ROI is a sensitive topic in our industry. It's elusive, complicated, frustrating and can be pretty damn expensive to do well, ironically. But let's exercise some caution before collectively agreeing to ditch any form of ROI as it applies to content. The "ROI of your mother" video Daniel shared of Gary Vaynerchuk is awesome, and is a talk I've referenced myself many times when arguing, ahem, discussing how to get to the bottom of returns with clients.

But I don't think Gary was suggesting we should ignore ROI entirely. Instead, we should resist meaningless pursuits of ROI, such as trying to put a dollar value on a tweet, download or visit. These types of engagement are usually far too granular to be correlated with or attributed to sales. Unless your content links directly to a sales opportunity, like Etsy! In which case you could actually put a dollar value on your content, if you needed to.

The truth is, sometimes your content creates purchase intent, and sometimes it doesn't. Sometimes, the needs of your content consumers and customers intersect, and sometimes they don't. Sometimes, you can quantify the value of your content based on how many leads or sales it drove, and sometimes you can't. In the end, we have to be better at distinguishing between a meaningful and meaningless pursuit of ROI, so we focus on measuring what matters.

So what should you do?

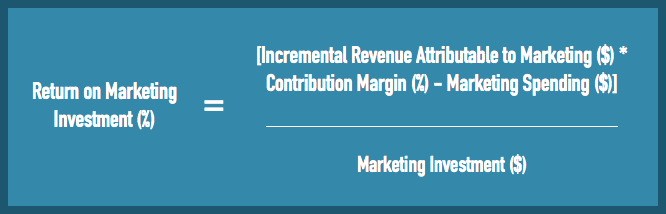

ROI is a tricky one. It’s less of a universal metric and more of a business question (e.g. what was my return?), and it applies different types of metrics to answer that question (i.e. spend, revenue, etc). If we’re looking at marketing ROI, the equation is generally accepted as this:

But the equation here is the easy part. Getting the data you need to solve this equation is hard, REALLY hard.

For example, knowing which financial measures to count within marketing investment is no simple task (e.g. do you just look at ad spend, or total cost of sales, people, production, etc). And getting your finance team to cut this data the right way isn't usually a simple request.
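Here's a minimal sketch of why the investment definition matters so much. It assumes the commonly cited form of marketing ROI, (return attributable to marketing minus marketing investment) divided by marketing investment. All figures and cost categories are invented for illustration.

```python
def marketing_roi(attributed_return: float, investment: float) -> float:
    """ROI as (return - investment) / investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (attributed_return - investment) / investment


# Hypothetical content program figures.
attributed_revenue = 150_000
costs = {
    "ad_spend": 40_000,
    "people": 35_000,
    "production": 15_000,
}

# Narrow view: only count ad spend as the investment.
roi_ad_spend_only = marketing_roi(attributed_revenue, costs["ad_spend"])

# Broader view: count every cost category.
roi_fully_loaded = marketing_roi(attributed_revenue, sum(costs.values()))

print(f"Ad spend only: {roi_ad_spend_only:.0%}")
print(f"Fully loaded:  {roi_fully_loaded:.0%}")
```

Same revenue, same program, and two very different ROI figures. Neither is wrong in itself, which is exactly why you need to agree on what counts as investment before anyone starts quoting a number.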

Another challenge with ROI is attribution, which is the process of linking certain outcomes to activities in your marketing execution. I can't solve your ROI or attribution woes here, but I can tell you that almost any attribution expert will agree that ROI isn’t a blanket metric that can be, or is, applied generally. And quantifying ROI accurately takes time, resources and creativity.

Going back to my point above, you should absolutely steer away from meaningless pursuits of ROI (e.g. dollar value of a tweet). But, that doesn’t mean you can’t (and shouldn’t) pursue ROI for something bigger and more meaningful, like measuring the returns of your entire content program. This is entirely feasible, albeit, complicated. Whether you want to pursue this is up to you, but I know many clients who have invested heavily in developing ROI models for their content program. And most of them will tell you that ROI in this context was a worthwhile pursuit.

Where can you start? Go find yourself a marketing attribution partner or expert and start the conversation by telling them what you want to accomplish, or what your c-suite is asking of you. In the last five years the attribution space has evolved, so it shouldn't be hard to find a full-service attribution agency, provider or consultant to help you. But on that point, be critical about who you partner with. Be cautious of an agency or firm that doesn't have a track record in attribution. You need an experienced and professional partner with proven methodologies.

4 – Benchmarks

Here’s a snippet of what Daniel said about benchmarks:

“All industry benchmark reports are bullshit.”

So here’s my problem. A benchmark isn’t really a metric, it’s a dimension of a metric which is usually based on a time interval (e.g. month over month, year over year, etc). I wrote a pretty extensive post about using benchmarks on my blog, which you can read here. I won’t go into detail now, but in the post I highlight 3 types of benchmarks: historical, competitor and industry.

Daniel clarifies that he doesn’t think all benchmarks are ’bullshit’. Instead, he says you should “only benchmark against your own previous performance.” I generally agree with where he’s going here, as I often tell clients they should always start with historical benchmarks first before thinking about competitors or the industry.

But to say that all industry benchmarks are useless isn't true. Mailchimp, for example, publishes a list of email marketing benchmarks every year broken down by industry, that I find super helpful. These numbers are based on Mailchimp’s actual customer data, and they give you a pretty good read on what success should look like in the world of email marketing.

Imagine you were tasked with starting email marketing at your company and had never done this before. You sign up for an eDM tool, pull your contact list together and send your first campaign. After a few days, you crack open your campaign analytics and see a bunch of numbers - 15% open rate, 2% click rate, 1% soft bounce, 0.5% unsub - what does it all mean? In this case, industry benchmarks can offer useful context to let you know what 'good' looks like. Hell, even seasoned eDM pros can still find use for industry benchmarks. Consumer digital behaviour is always changing and so these benchmarks can change over time, for better or worse. So having an external point of reference can be extremely valuable.
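As a quick illustration of using a benchmark for context, here's a small sketch that compares the campaign numbers above with an industry reference. The benchmark values are placeholders I made up (they are not Mailchimp's published figures), and note that "better" points in different directions depending on the metric.

```python
# Campaign results from the example above (percentages).
campaign = {"open_rate": 15.0, "click_rate": 2.0, "soft_bounce": 1.0, "unsub": 0.5}

# Placeholder industry benchmarks: invented values, swap in real ones.
benchmark = {"open_rate": 20.0, "click_rate": 1.8, "soft_bounce": 0.6, "unsub": 0.5}

# For these metrics a lower value is the better outcome.
LOWER_IS_BETTER = {"soft_bounce", "unsub"}


def compare(campaign: dict, benchmark: dict) -> dict:
    """Return (difference in percentage points, verdict) for each metric."""
    report = {}
    for metric, value in campaign.items():
        diff = round(value - benchmark[metric], 2)
        if diff == 0:
            verdict = "on par"
        else:
            is_better = diff < 0 if metric in LOWER_IS_BETTER else diff > 0
            verdict = "better" if is_better else "worse"
        report[metric] = (diff, verdict)
    return report


for metric, (diff, verdict) in compare(campaign, benchmark).items():
    print(f"{metric}: {diff:+.2f} pts vs benchmark ({verdict})")
```

It's a trivial comparison, but for someone sending their first campaign it turns a pile of unfamiliar percentages into something they can act on.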

Even Google Analytics has an industry benchmarking tool, released a few years back under Audience reports. The benchmark report basically takes data from other GA web properties, anonymizes the data, and allows you to compare your website’s performance to other sites based on traffic volume and industry. Again, these are industry benchmarks, and it’s pretty awesome data to have.

So what should you do?

Listen, I’m pretty sure Daniel was just trying to say don’t become overly reliant on competitor and/or industry benchmarks. Which I wouldn't disagree with. But coming out and saying all industry benchmarks are garbage and that you should NEVER use anything other than historical isn’t fair. There are plenty of scenarios where it would be entirely appropriate, and useful, to look to your competitors and/or industry to put your own performance and growth in context.

5 – The Funnel

Here’s a snippet of what Daniel said about Funnels:

“Ok. This is a strategic framework, not a metric but... We marketers like to tell ourselves that people move down the marketing funnel to a conversion. Customers will consume top of funnel content, then middle funnel, then bottom funnel.

It's bullshit. They don't do this.”

Actually, no problems here. He nailed this one.

Ok, this post was a lot longer than I expected it to be, so if you've reached the end, high five (and thanks for reading). I think the reason for the length is that I wanted to explore each of these points/metrics thoroughly so you have a balanced point of view on the implications of ditching metrics entirely. I'm certainly not trying to advocate any of these metrics as best-in-breed measures for content marketing. Some of these can be completely worthless measures, depending on your channel strategy and objectives. And that's the most important thing here: the context for when you decide to use the metrics or ignore them. Most of the points I’ve made above really just boil down to knowing when a metric is appropriate and/or relevant to apply based on your objectives. It’s as simple as that.

And like I said at the beginning of this post, we need to start engaging in more meaningful dialogue about data and how it can be applied to our industry. The only time it would be appropriate to write off a metric entirely is if it’s been determined to be unfit or fundamentally flawed by a broader community of industry experts (take the Barcelona Principles and PR's rejection of AVEs, for example). In the end, we shouldn’t be asking ourselves which metrics we should NEVER use, but rather, which metrics are most relevant to our needs and best suited to measuring performance against our business objectives.

Have you had a good or bad experience using any of the metrics mentioned here? Or, which metrics have you found most useful when measuring the effectiveness of content marketing? I'd love to hear your thoughts in the comments below. Thanks for reading.

[UPDATE #1]

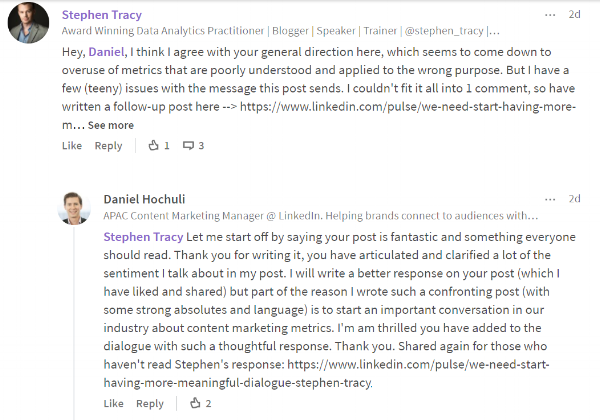

Daniel, the author of the original "5 bullshit metrics" article, responded to my post. In fact, he was one of the first to like and share my response, and he's been a great sport. See his comments below.

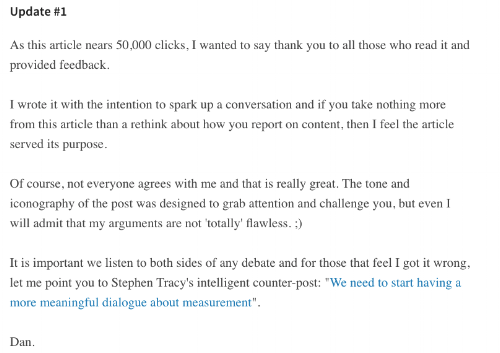

[UPDATE #2]

In addition to Dan's original comment on my post, he's now added a link to this article at the end of his with some nice commentary about the discussion his article has sparked.