Lessons in Survey Design: Using Rating Scales Effectively

Header image by @benchaccounting via Unsplash

A few weeks back I placed an order online with a popular eTailer in Singapore. The order included 4 items, all fulfilled by different sellers so naturally each item was shipped individually. In the end only 3 of the 4 items made it to my doorstep. After checking in with the eTailer's customer service team they told me the 4th item was lost in transit, and so they offered to cancel the order and refund my money.

The day after the refund was processed I received an email from the company confirming the failed delivery (order # redacted in the screenshot below).

Nothing too special here, just an automated email confirming the order cancellation. But my eyes were quickly drawn to that orange rectangular box in the bottom left corner inviting me to "TAKE THE SURVEY". As someone who works in market research and who appreciates the importance of (and challenges with) getting your customers to give feedback, I humbly obliged. So I clicked the link, launched the survey and here's what I saw:

I've redacted the company name and logo from the screenshot above as my goal here isn't to out this company for conducting shoddy research. But there are some serious problems with this survey that need to be addressed, and learned from.

So what's the problem?

The first thing that stood out to me was the scale, particularly the repetition of the labels (i.e. neutral repeated twice, unlikely repeated six times) and their disconnect with the numeric intervals (i.e. 0, 1, 2 3, etc). Rating scale questions are typically used to give the researcher more precision, but they need to be used effectively. And two common characteristics of an effective scale is that the intervals are both gradual and progressive. This basically means that the distance between the intervals are equal, and that each subsequent interval is of greater value than the last (i.e. 2 > 1, 3 > 2, 4 > 3, etc). The scale used above is neither consistently gradual or progressive.

A progressive scale

In the survey above the numeric scale is progressive but the text labels aren't. Labels are often used to provide additional context or instructional cues to the respondent, so in many ways they carry more weight in terms of giving the survey participant guidance. Which makes it all the more confusing when the labels repeat themselves (i.e. unlikely) as there is no discernible difference between them. For example, since unlikely is repeated 6 times, is the participant meant to interpret 4 - Unlikely as being more unlikely than 3 - Unlikely? It seems reasonable that that's what the designer of the survey was gunning for, but it’s still confusing. And this can create issues with how usable the data is when it comes to drawing conclusions. Quantitative research data is only as good as your design and the participants' understanding of what's being asked. If anything is unclear, like say, the practical difference between scale intervals, then this could taint or even nullify your data.

A gradual scale

Aside from label repetition there's another problem with this rating scale. Take a look at intervals 9 - Very Likely and 10 - Highly Likely. Can you tell me the difference between something that is very and highly likely? Because I sure can't. The words very and highly are both adverbs that would typically represent an extreme emphasis of something. So it would have been more effective to draw a clearer separation between the interval labels, like somewhat likely and very likely.

But while we're on the topic of labelling, it's worth pointing out that you don't actually need to label every single interval on this type of rating scale. For numeric, aka interval rating scales, you would generally only label the opposing ends of the scale and let the participant interpret everything in between. Which would look something like this:

One drawback of rating scales is that they can be highly subjective. But this can also play to their strength as having more data points gives you extra room to analyze things like standard deviation, which can help you understand how much variability there is in your data. So rating scales certainly have a purpose, and as I mentioned you don't really need to spell out what each interval means for the participant. In fact, if you can come up with 11 progressive adverbs for likely I'll buy you a coffee, no joke.

Designing surveys with purpose

At first I actually thought the errors made in the eTailer survey were an honest mistake, which would be entirely forgivable. I mean, it seemed plausible that whoever designed the survey only meant to use a 5-point scale since there are only 5 unique response labels. If that was the case, maybe something just went a bit wonky when they published the survey, and it was actually supposed to look something like this:

But then I took a closer look, and after a while I realized that the question design was probably intentional. Why do I think this? NPS.

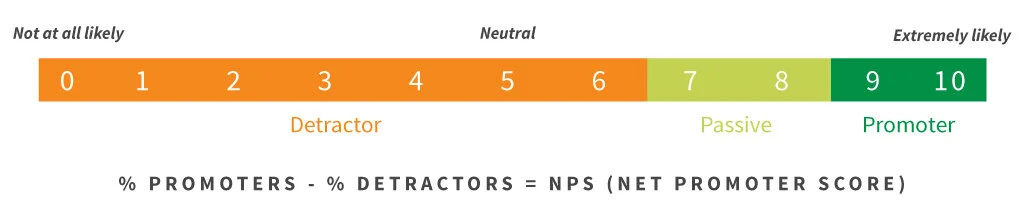

If you're not familiar with it, NPS (Net Promoter Score) is a common approach to measuring brand advocacy among consumers. The research framework is based on asking respondents how likely they are to recommend a brand/product to a family member, friend or colleague in the form of an 11 point interval rating scale. Then NPS clusters all of the respondents who chose 9 and 10 as promoters, the 7 and 8's as passives, and the 0 to 6's as detractors.

Source: www.netpromoter.com

So what’s the connection between NPS and this survey question? Take a closer look.

Here's the eTailer survey question and the NPS framework side by side.

What seems to have happened here is the survey designer quite literally mapped the NPS cluster ranges (i.e. detractors, passives, promoters) to the survey response labels. The problem is that the NPS framework is an output of the research, and is meant to be invisible to the respondents.

Disclosing this kind of information creates 2 problems. First it results in a poor user experience for the survey participant (e.g. confusing repetitive labels). And second, it reveals information about how the participants’ answers will be used.

There’s this interesting concept in psychological and sociological research known as deception. This is a tactic sometimes used to hide the true purpose of the research as a means to reduce the chances of this influencing or biasing the participant's behaviour in any way. Now deception is a controversial topic in the world of experimental research, and one that needs to be addressed with care as it walks a very fine ethical line. And I’m not trying to suggest you attempt to hide or mask the underlying objective of your customer research. Indeed, the intent of this survey was clearly stated (to measure brand advocacy), which is totally fine.

And deception in research isn’t really relevant here. But the notion of hiding certain information about the research from the participant, such as how the data will be used, certainly is. By revealing this kind of information to the participant you could bias their response. For example, if your goal was to measure brand advocacy among a population and you chose to use a rating scale, implying to the participant that their response classifies them as a brand promoter might lead them to change their answer.

You might think this is a good thing because it better aligns the respondent’s current beliefs, behaviour, or recall of their behaviour with the research objective. But that's not really true. Asking someone how likely they are to advocate for a brand and then categorizing them into a segment later is quite different from directly asking someone if they consider themselves a brand promoter. The word 'promoter' has a lot of baggage, and if you're trying to measure something like the likelihood to advocate using a scale, you want your participant to just punch a rating into the answer and not waste time considering whether they see themselves as a 'promoter'.

Look, the average consumer isn't going to know what NPS is or draw the connection I've made between the scale interval labels and the NPS clusters. So the bigger sin here is definitely that of a poor survey design, particularly because the designer seems to have included features of both ordinal and interval rating scales (i.e. labelling each interval on a numeric scale). Frankly, the repetition on the scale and unclear differentiation between some intervals is enough to call into question the quality of the data they’ve collected, and how they can generalize about the results.

This really boils down to making poor design choices when building a survey and not having the right people (i.e. trained researchers) to lead this process. What I think probably happened was someone at this company decided, “hey we should measure brand advocacy and I've heard of this NPS thing”. Then they read the methodology on the NPS website and whipped together a quick survey without fully understanding the methodology. In the end the person tasked with setting up the survey question took the NPS framework a little too literally, and mapped the NPS clusters directly on to the question scale.

The funny thing is most survey distribution platforms like Survey Gizmo and SurveyMonkey already have pre-formatted NPS question types in their library. So it’s hard to understand how this happened at all.

Some closing thoughts

If you're conducting quantitative research it's important to understand that doing this effectively requires more than a contact list and subscription to SurveyMonkey. When it comes to designing surveys in particular, a good researcher will consider a wide range factors, such as:

The underlying research objective (RO)

The purpose of each question and how they relate to the RO

How clearly and effectively the question is presented (e.g. avoiding unnecessary jargon, avoiding leading, steering or double barrelled questions, etc)

Which question type is most appropriate (e.g. multiple choice single or multi select, rating scale, open ended, etc)

The mechanics of the question (e.g. setting response quotas, capping multi-select options, applying skip logic, response piping, etc)

The type of data each question will yield (e.g. binary data vs categorical array vs open ended text, etc)

And finally, the overall flow and experience of the survey (e.g. restricting the volume of response options to reduce cognitive load)

So the next time you’re about to hit publish on that survey, take some time to review it first and consult an experienced researcher.

Have fun and good luck!

If you enjoyed this article then you’ll love my quantitative market research course. Click the link below for more info.