Here's a Full Rundown of My Talk at AMES 2016

Last week I gave a short talk at AMES 2016. You can read a full recap of the event on Campaign Asia here.

I’ve had a few requests to share the full presentation deck, but I’ve realized that some of the slides may not make a ton of sense without the talking points. So here’s a full rundown of the presentation and what I covered.

Spoiler alert: at 3000+ words this post is a little longer than usual. So, if you go through the presentation and there is a particular section you're interested in, you can skip to it using the links below:

10 Tips For Analytics Success

Here’s the presentation in full:

To quickly recap, the session was titled The Human Side of Analytics and looked at the role of people, communication and storytelling within the big data and analytics industry. I kicked off the talk with 3 trends I’ve observed in the industry globally and within APAC.

The big data and analytics industry is growing rapidly, with annual spending in this sector expected to hit $48.6 billion in 2019.

The technology landscape that supports and drives the analytics industry has become very sophisticated, but the ways in which we think about, invest in and value talent haven’t advanced at the same pace.

In APAC, the appetite for analytics services and solutions is high, but maturity is still relatively low. I like to ground maturity in 5 key pillars:

Does your business invest/allocate adequate budget for analytics?

How sophisticated are your analytics use-cases (e.g. going beyond basic reporting and pursuing more advanced applications such as multivariate testing or predictive analytics)?

Does your business have the right skills and talent in place?

Does your business have the right organizational structure for analytics (e.g. centralized vs decentralized)?

Does your business actually act on the output of your analytics activities and does this create some form of impact, or business value?

Following these 3 trends, I offered 10 tips for how you can get the most out of your investment in data and analytics.

1 – Ask Good Questions

Success with analytics really boils down to being able to ask good questions, and this is something that fancy software can’t do for you. The reality is that machines are very good at helping us answer complex questions, but they’re not very good at asking them.

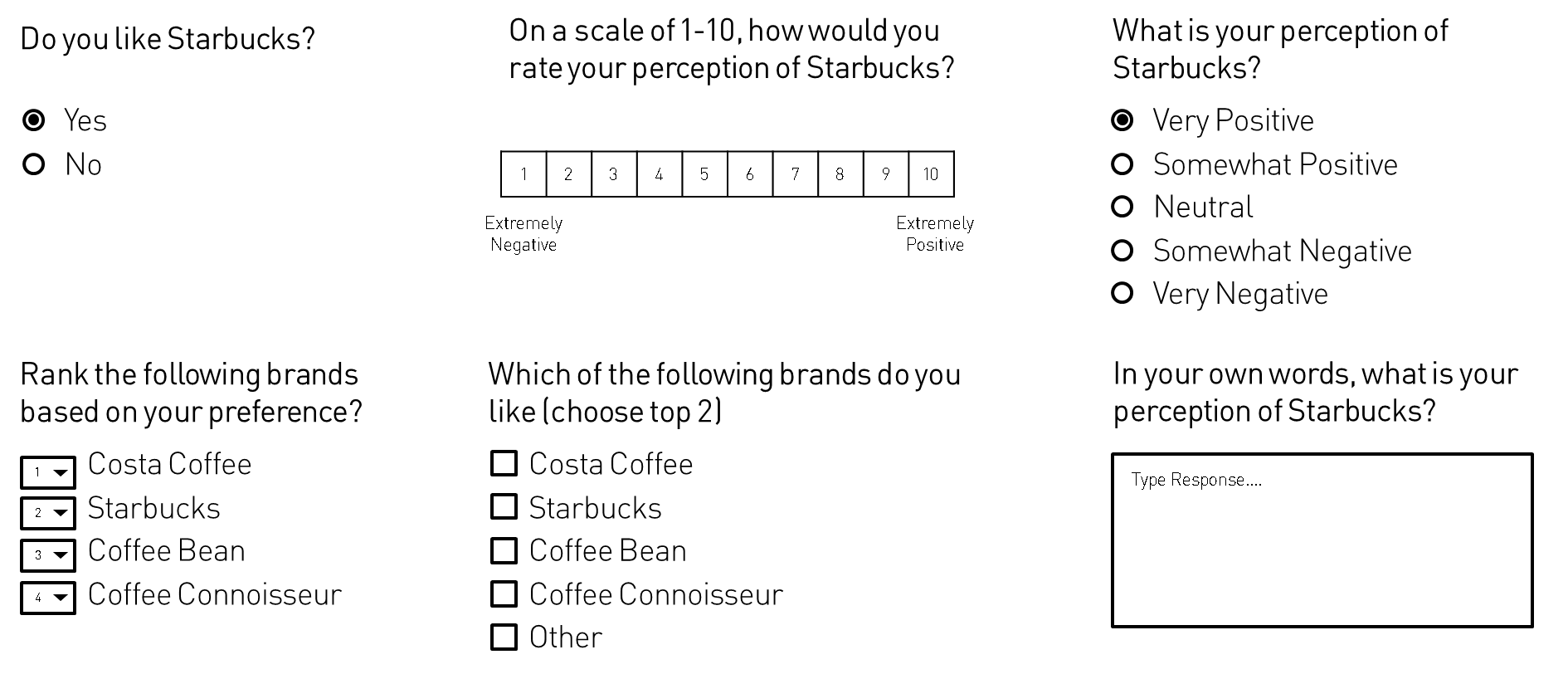

Quantitative research, specifically survey design, is a great place to look for inspiration when it comes to asking good questions. When designing a survey a good researcher will meticulously craft each question to ensure it removes bias, doesn’t steer the respondent into a particular response, and is effective in communicating the question to the participant (and in some cases, hiding the true objective of the question).

In the presentation, I shared an example of a quantitative survey workflow that has been visualized. I love this example because it shows how much effort and complexity goes into research design. In the diagram below you can see the questions and response options, the question routing (e.g. if you answer A go to question Y), as well as additional survey mechanics (e.g. setting response quotas, etc). Also, the coloured vertical lines on the left show respondent segments and map out which questions are served to different groups based on their answers.

Quantitative Survey Workflow

Designing surveys is hard work!

Then I shared another example, which I think demonstrates just how much creativity goes into crafting effective questions. The diagram below illustrates 6 different ways you could ask the same question when designing a survey, from single-select and multi-select multiple choice questions to scale and ranking questions, to open-ended text questions.

Survey Design - 6 Ways to Ask the Same Question

2 – Think Long Term

One of the most interesting findings in Econsultancy’s 2016 Measurement and Analytics survey was that 66% of the participating companies stated they don't have a formalized analytics strategy in place. This is like trying to sail across an ocean without a map or compass. Just like your investment in digital, content or eCommerce, your analytics program needs a strategy, vision and roadmap.

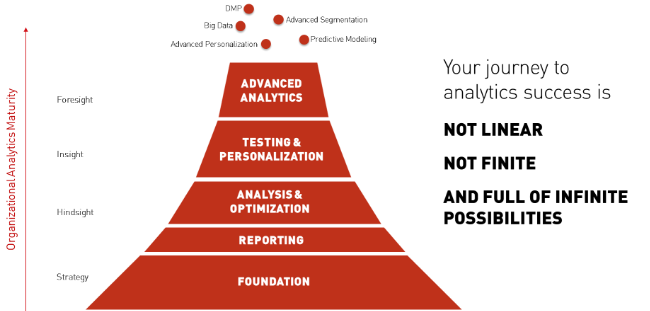

I also shared an analytics maturity framework. The major takeaway here is that you can’t progress to more advanced applications of analytics without having a strong foundation in place.

Analytics Maturity Framework

I also shared an example of what an analytics roadmap actually looks like. A roadmap needs to consider things like existing analytics capabilities, output, talent and tools so you can map out a realistic path to achieving your goals. For example, if you wanted to build a Data Management Platform (DMP), this could take months or years depending on your current level of maturity. So, having a realistic path to success is key.

3 – Start with People

Just over 10 years ago Avinash Kaushik proposed what he called the 10 / 90 Rule for Magnificent Web Analytics Success. Put simply, the rule stipulates that for every $10 you spend on a tool you should be spending a corresponding $90 on intelligent agents (i.e. humans). The world has changed a great deal since 2006 when Kaushik first proposed the idea, but I think it’s even more relevant today than it was 10 years ago. As I mentioned earlier, the technology landscape in the analytics industry has advanced considerably over the years. But the ways in which we think about, value, and nurture people in this industry haven’t progressed at the same pace. As a result, many companies are finding themselves in a position where they have lots of great technology and software, but no means to use it.

Kaushik’s rule can be a tough pill to swallow for many business leaders. Just think about it. If you’re currently investing $25,000 per year on analytics software, this means you should be spending at least $225,000 per year on people (e.g. analysts, data engineers, etc). In Singapore (where I work!), that’s easily one manager and 2-3 junior to mid-level analysts. If you are spending $250,000 per year on technology (which is in line with many enterprise-level analytics software subscriptions), you should be spending at least $2,250,000 on humans. That’s a pretty big team!
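To make the arithmetic concrete, here is a minimal Python sketch of the 10/90 split. The function name `people_budget` and its parameters are made up for illustration; the only thing taken from the rule itself is the ratio of $10 on tools to $90 on people.

```python
def people_budget(tool_spend: float, tool_parts: int = 10, people_parts: int = 90) -> float:
    """Return the people spend implied by a tools-to-people ratio (10:90 by default)."""
    if tool_spend < 0 or tool_parts <= 0 or people_parts < 0:
        raise ValueError("spend and ratio parts must be non-negative, with tool_parts > 0")
    return tool_spend * people_parts / tool_parts


# The two software budgets mentioned above.
for tool_spend in (25_000, 250_000):
    print(f"${tool_spend:,} on tools -> ${people_budget(tool_spend):,.0f} on people")
```

Changing `tool_parts` and `people_parts` lets you see what a less aggressive split (say 30/70) would imply for the same software budget.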

Avinash Kaushik - 10 / 90 Rule

Not everyone agrees with the 10/90 rule, but suffice it to say that you need the right balance of people and technology for your analytics program to be effective. I’ve met many clients that have best-in-breed, enterprise-grade analytics tools but not a single analyst to make use of their software.

I often tell clients who are on the verge of a software procurement that they should consider whether that budget might be better spent on a person instead of a tool. We absolutely need technology to create efficiencies. Most of the time analytics software creates value through the aggregation, processing and visualization of data. But the real magic comes from people, and if you’re just kick-starting your analytics program and have a tight budget, you’re probably going to get more value out of a good analyst than an expensive tool.

4 – Seek Truth, Not Validation

If you’re really serious about being a data-driven business you have to be ready to accept some hard truths. Data can be massaged, manipulated or presented without context in ways that put a positive spin on something that didn’t work very well. I fully understand the pressure to show great results to the executive branch, but if you really want to move past basic applications of analytics you need to be ready to accept bad results, and you need to be willing to learn from those outcomes and take a new course of action.

Later in the presentation, I shared actual results from a campaign I worked on a few years back. There was a ton of media spend behind the campaign, and it generated some big numbers in terms of awareness (i.e. impressions, video views, site traffic). These were also the numbers reported back to the executive branch, which in turn propped this campaign up across the business as a model for success. However, if you look at the metrics that measured visitor quality and conversion, you see a very different story. 22+ million and 230K+ website visits resulted in just 103 conversions, and the average visitor only spent 11 seconds on the site. Despite being heralded as a digital success story within the company, the reality was that this campaign was a total failure.
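A quick way to see past the big awareness numbers is to compute the conversion rate directly. The sketch below uses the two figures quoted above; since the post only gives "230K+" visits, the value `230_000` is an assumed lower bound, so the real rate would be the same or slightly lower.

```python
def conversion_rate(conversions: int, visits: int) -> float:
    """Share of visits that ended in a conversion."""
    if visits <= 0:
        raise ValueError("visits must be positive")
    return conversions / visits


visits = 230_000     # assumption: "230K+" taken as exactly 230,000
conversions = 103    # figure quoted in the post

rate = conversion_rate(conversions, visits)
print(f"Conversion rate: {rate:.3%}")
print(f"Visits per conversion: {visits / conversions:,.0f}")
```

On these assumed inputs the rate comes out below 0.05%, which is the kind of number that rarely makes it into the executive summary when impressions and video views look healthy.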

Again, I understand the pressure to report good results up the line. But if you never move beyond vanity metrics and you can’t find the courage to report on failure, you simply aren’t ready to become a data-driven company. The best part about failure is what you learn. And if you can find ways to fail fast, learn from those mistakes and not repeat history, then you’ll probably find that your investment in analytics is paying off.

5 – Understand Your Data

I wrote about the importance of understanding your data in a guest post on Outbrain a few months back (see 5 Data Visualization Lessons For Creating Winning Infographics, section 1 – KNOW your data). The main point here is that if you or someone on your team doesn't understand how your data is/was created, what it measures and how it’s structured, you’re at risk of misinterpreting it.

I shared an example of an infographic that featured a misleading diagram that was the result of the authors not having a firm grasp on how their data was structured and how best to visualize it. You can read more about this example, where it went wrong and how not to make the same mistake by following the link above to the Outbrain post.

But generally speaking, the best way to avoid making small mistakes that can lead to a complete misinterpretation of your data is to ensure you or someone on your team can answer the following questions:

How was the data collected? (e.g. poll, census, secondary, etc)

What metrics, data formats and units of measure are included?

How is the data structured? (e.g. by date/time, by category, etc)

How granular is your data set? (e.g. broken down by day, month, year, etc)

Where and how is the data stored? (e.g. 3rd party vendor, owned database in the cloud, on-premise, etc)

6 – Understand the Limits of Technology

When you’re spending a sizable amount of your budget on fancy analytics software you probably assume that the software company you’re paying has taken steps to ensure that the data their tool(s) produce is completely accurate. Sadly this isn’t always the case.

Later, I shared a blog post published by Peter Borden, formerly a marketing advisor at SumAll. His post, titled How Optimizely (Almost) Got Me Fired, details an event where SumAll, which was a customer of Optimizely (an A/B testing solution) at the time, discovered that the tool was reporting false positives. To put it simply, Borden and his team ran a test where they pitted 2 versions of the exact same experience against each other and Optimizely still declared a statistically significant winner. The root of the issue boils down to a combination of default settings in Optimizely (e.g. choosing a one-tailed vs two-tailed test) as well as the company not being clear and transparent enough with customers about the risks of choosing one test over another. Borden’s post is excellent and you should absolutely give it a read. But what’s interesting is that Optimizely actually made changes to their software based on his post, and they created a statistics blog as a means to help customers better understand their product and how to run effective tests.

This is probably one of the best examples of why you should never just take the data from an analytics tool at face value. The good news is you don’t have to be a statistician to know when a tool might be misleading or flat out lying to you. You just need to know enough about the data and how it’s collected, processed and stored to understand when it may not be completely accurate.
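The kind of A/A test Borden describes can be sketched as a small simulation. This is not how Optimizely worked internally; it is a simplified illustration, assuming a standard two-proportion z-test, of how reading a one-tailed p-value in whichever direction happens to look better flags more "winners" than a two-tailed test when both variants are identical. The traffic numbers, the 5% conversion rate and the function names are all made up for the example.

```python
import random
from statistics import NormalDist


def z_score(conv_a: int, conv_b: int, n: int) -> float:
    """Two-proportion z statistic for two groups of equal size n."""
    pooled = (conv_a + conv_b) / (2 * n)
    std_err = (2 * pooled * (1 - pooled) / n) ** 0.5
    return 0.0 if std_err == 0 else ((conv_b - conv_a) / n) / std_err


def false_positive_rates(trials=1000, n=500, true_rate=0.05, alpha=0.05, seed=42):
    """Simulate A/A tests and return (one_tailed_rate, two_tailed_rate)."""
    rng = random.Random(seed)
    norm = NormalDist()
    one_tailed_hits = 0
    two_tailed_hits = 0
    for _ in range(trials):
        # Both variants share the same true conversion rate: there is no real winner.
        conv_a = sum(rng.random() < true_rate for _ in range(n))
        conv_b = sum(rng.random() < true_rate for _ in range(n))
        z = abs(z_score(conv_a, conv_b, n))
        p_one = 1 - norm.cdf(z)   # one-tailed, applied to whichever variant is ahead
        p_two = 2 * p_one         # two-tailed
        one_tailed_hits += p_one < alpha
        two_tailed_hits += p_two < alpha
    return one_tailed_hits / trials, two_tailed_hits / trials


one_tailed, two_tailed = false_positive_rates()
print(f"A/A tests declared significant (one-tailed): {one_tailed:.1%}")
print(f"A/A tests declared significant (two-tailed): {two_tailed:.1%}")
```

Because the one-tailed p-value here is always half the two-tailed one, the one-tailed reading can never flag fewer false winners on the same data, which is why the choice of test matters even if you never look at the math yourself.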

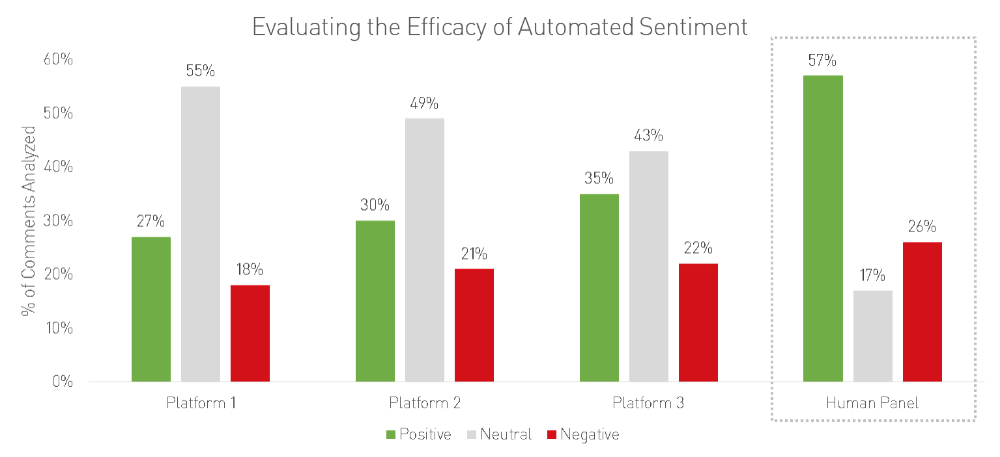

Another space where I often see people being overly trusting of analytics software is social listening, particularly automated sentiment. Automated sentiment analysis is a core feature of many social listening tools, and it’s also the most likely to be wrong. I also shared the results of a case study by Paragon Poll, which ran a sample of 10,000 comments through the sentiment algorithms of 3 social listening tools. They then ran the same sample of comments through a human panel, which was tasked with tagging the sentiment of the content (e.g. was it positive, neutral or negative). You can see from the results below that the 3 automated systems (Platforms 1-3) were relatively close in terms of how they tagged the comments: roughly 31% positive, 49% neutral and 20% negative. However, the human panel tagged the same data as 57% positive, 17% neutral and 26% negative, which is very different from the automated systems. And to be honest, I would trust the human panel any day of the week.
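To put a number on how far apart the two readings are, here is a small sketch that compares the approximate automated split with the human panel's split. The percentages are the rounded figures quoted above; the variable names are illustrative only.

```python
# Rounded shares (in percent) quoted in the post.
automated = {"positive": 31, "neutral": 49, "negative": 20}
human = {"positive": 57, "neutral": 17, "negative": 26}

# Percentage-point gap per label: automated minus human.
gaps = {label: automated[label] - human[label] for label in human}

# Half the sum of absolute gaps is the smallest share of comments that
# must have been tagged differently for the two splits to both hold.
min_disagreement = sum(abs(gap) for gap in gaps.values()) // 2

for label, gap in gaps.items():
    print(f"{label:>8}: {gap:+d} points")
print(f"At least {min_disagreement}% of comments were tagged differently")
```

The true disagreement could be higher, since two systems can arrive at similar totals while labelling individual comments differently.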

Never make the mistake of thinking your analytics software is going to be right 100% of the time. Whether we’re talking about website analytics or social media monitoring, the quality of your data will depend on a wide range of factors. I won’t get into the details here, but I wrote a post on this a few months back that you can check out if you want to learn more.

7 – Ensure You Have Ownership

Ownership is key to growing your analytics practice. Without ownership, you’ll find yourself running in circles without generating any real impact from your data. During the presentation, I shared five focus areas that your principal analytics lead or team should be owning. This includes:

Vision – Ensure you are on track and pursuing a future vision for how your business uses data.

Accountability – Ensure there is accountability for your analytics output.

Governance – Ensure governance controls are in place and observed as they relate to analytics.

Collaboration – Encourage and drive collaboration across the business to ensure data isn’t stuck in silos.

Evangelism – Advocate data-driven thinking across the business and create new audiences and stakeholders for data.

8 – Invest in Storytellers, Not Just Data Crunchers

I’ve been talking about storytelling and the role it plays within the big data and analytics industry for some time now. I like to refer to storytelling with data as empirical storytelling, which I define as the process of using data to tell a rich and compelling story. In practice, empirical storytelling requires a proficiency in collecting, cleaning, interpreting and visualizing data, but it also requires someone who can communicate the data and key message in a way that resonates with the audience.

The need for storytellers in this industry has never been greater. I’ve met gifted analysts that were horrible at presenting their work, and I've also met lots of great communicators that just don’t understand data. An empirical storyteller sits right at the intersection of the art and science of analytics.

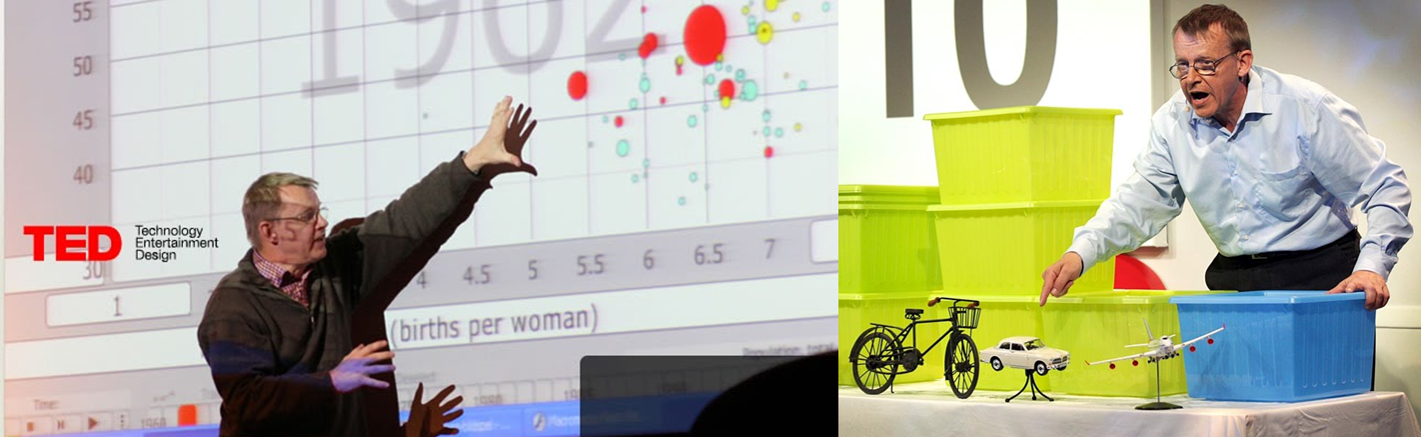

Hans Rosling is probably one of the greatest empirical storytellers I’ve ever seen. Just check out these two different TED Talks Rosling gave a few years back:

Hans Rosling TedTalk - Insights on HIV, in stunning data visuals

Hans Rosling TedTalk - Global population growth, box by box

The difference between these two talks is a perfect example of empirical storytelling at work. Rosling is a master of both his data and the way in which he communicates it to his audience, and he pushes himself to find new and engaging ways to communicate regardless of the medium (e.g. animated DataViz vs analogue).

9 – Find Meaningful Ways to Communicate Through Data

Reporting on campaign performance doesn’t always need to be done through some boring Excel table. In fact, finding new and engaging ways to visualize and communicate data can actually make it more likely to resonate with your audience.

In her book Resonate, Nancy Duarte got it right when she said that

“you can have piles of facts and still fail to resonate. It’s not the information itself that’s important but the emotional impact of that information”

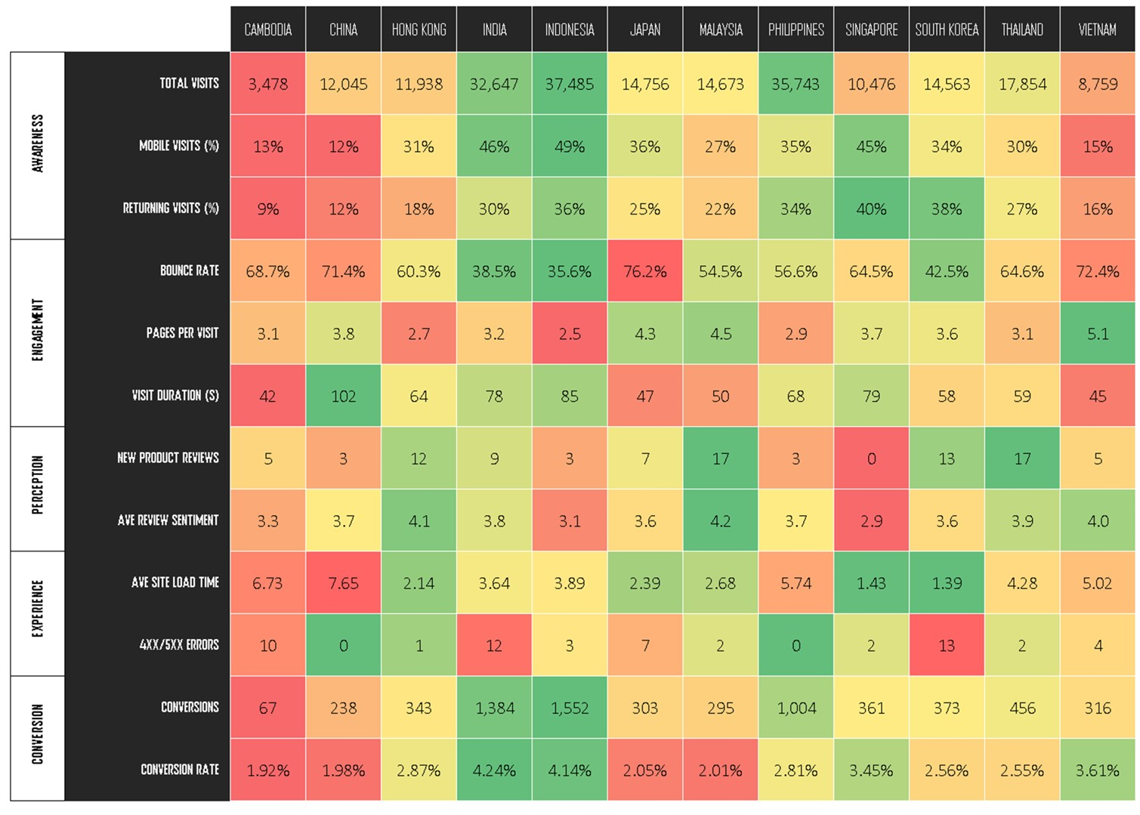

I also shared an example of a campaign dashboard I used for a client recently (though the data has been abstracted). The challenge here was that the group looking at the data was mostly senior and wanted to see how multiple market websites were performing across a range of metrics, and they didn’t want to see a 20+ slide PowerPoint deck. As a solution, we created a heatmap which allowed us to plot multiple metrics across multiple entities (e.g. countries) in the same visual. This worked really well, as the group was able to quickly spot which markets were and were not performing, and for which metrics.

A heatmap isn’t going to work for every scenario. In fact, I would say that heatmaps only work well in very specific circumstances. The main point here is that you should select the right medium and design for communicating data based on your audience’s needs, business function, and comfort level with or understanding of the underlying dataset.

10 – Transform Data into Insight

The most important thing you need to understand about data is that it’s useless in isolation. Data is simply a means to an end: it’s the raw material you process and refine in order to create something of value (e.g. insight). And insight needs to enable a business decision. If you aren’t going beyond looking at pretty charts and uncovering real insight, your analytics program is failing you. But even more importantly, you need to act on insight. You can have all the expensive tools in the world and a team of gifted analysts, but if you never optimize, improve or at least experiment based on what your data tells you, you’re wasting your money.

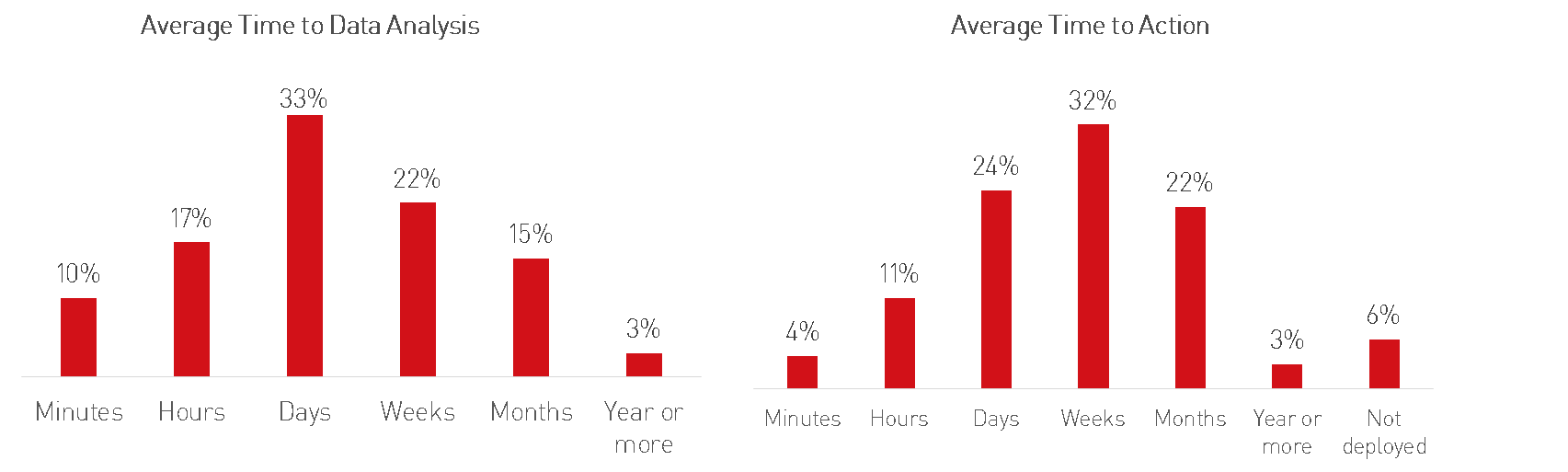

Finally, to wrap up the presentation, I shared the results of some research conducted by Rexer Analytics where they surveyed analysts from across the globe and asked them questions related to how they work with and use data. The charts below show the results from two very interesting questions: what is your average time to analysis (from the time your data is created), and what is your average time to deploy optimizations (discovered through data analysis)?

Reducing time to analysis is relatively easy to do, and can be addressed through a mix of the right people and technology/software. However, reducing your average time to deployment is much more difficult as this could involve changing existing ways of working, processes and protocols across different business silos (e.g. technology, marketing, etc).

But before you set out to reduce the average time it takes your business to deploy optimizations, a good place to start is to at least make sure you’re tracking the number of recommendations/optimizations you’re uncovering through data analysis. That way, if your analytics team were to deliver 50+ recommendations and the business didn't deploy a single one, you'll probably be able to identify which part (or parts) of your business need to change or evolve in order for you to truly become a leaner, data-driven business.
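Tracking this doesn't need special software. As a rough sketch, a simple log of recommendations with the team that would have to act on each one is enough to show where things stall. Everything here is hypothetical: the `Recommendation` structure, the team names and the sample entries are invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Recommendation:
    title: str
    owner: str      # team that would need to deploy the change
    deployed: bool


def deployment_summary(recs):
    """Return {owner: (deployed_count, delivered_count)} for a list of recommendations."""
    delivered = Counter(r.owner for r in recs)
    deployed = Counter(r.owner for r in recs if r.deployed)
    return {owner: (deployed[owner], delivered[owner]) for owner in delivered}


# Hypothetical log entries.
recs = [
    Recommendation("Shorten checkout form", "technology", False),
    Recommendation("Pause low-quality display placements", "marketing", True),
    Recommendation("Add event tracking to video player", "technology", False),
    Recommendation("Retest landing page headline", "marketing", False),
]

summary = deployment_summary(recs)
for owner, (done, total) in summary.items():
    print(f"{owner}: {done}/{total} recommendations deployed")
```

Even a spreadsheet version of this gives you a delivered-versus-deployed count per team, which is usually enough to start the conversation about where the bottleneck sits.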

Wrapping Up

Phew! That was a lot to cover in a 25-minute talk! Anyways, I had a great time at AMES this year. There were some great speakers, great people, and some really great work awarded at the ceremony. And if you didn’t get a chance to go this year, I would definitely recommend participating next year.

And here are a few photos of my talk. Enjoy!

The human side of analytics? Stephen Tracy of @sapientnitro breaks it down at #AMES2016 pic.twitter.com/9QXayvTD9N

— Campaign Asia (@CampaignAsia) May 31, 2016

The state of analytics in Asia, high interest but low maturity #AMES2016 pic.twitter.com/TrlI5zmCIy

— Campaign Asia (@CampaignAsia) May 31, 2016

We see that our clients are heavy on data/insights but lack capability to reaction fast enough- Tracy @sapientnitro #AMES2016

— Campaign Asia (@CampaignAsia) May 31, 2016

Analytics isn't a department, it's a discipline the spans the organisation - Tracy @sapientnitro #AMES2016

— Campaign Asia (@CampaignAsia) May 31, 2016